使用虚拟机安装一个K8s集群

因阿里云加速服务调整,镜像加速服务自2024年7月起不再支持,拉取镜像,下载网络插件等操作,需要科学上网访问DockerHub。

安装全过程均使用ROOT权限。

1.安装前准备工作

这里采用3台CentOS虚拟机进行集群安装,安装前需要环境准备:

使用虚拟机VMware新建一个NAT类型网络(一般都会默认自带,只进行设置即可),我的起名叫VMnet8,设置VMnet8的子网IP为

192.168.228.0,子网掩码为255.255.255.0,网关地址为192.168.228.2,起止IP地址范围192.168.228.3-192.168.228.254,并勾选”将主机虚拟适配器连接到此网络”为物理机分配IP地址,一般会分配192.168.228.1给物理机。VMware安装3台Linux虚拟机,可以安装一台,然后完整克隆,这里我采用的安装镜像版本是CentOS-7-x86_64-Minimal-2009,安装完成后,设置网络为VMnet8,将IP获取方式由DHCP修改为静态,并将IP地址分别设置为

192.168.228.131,192.168.228.132,192.168.228.133,再分别设置网关,子网掩码,DNS,MAC地址,并保证MAC地址互相不重复。

系统下载地址 https://mirrors.aliyun.com/centos/7/isos/x86_64/

2.安装docker

K8s是个容器编排工具,需要容器环境,这里容器环境采用Docker,每台机器都要安装docker环境

3.安装K8s集群

3.1 安装条件

- 兼容的Linux发行版(Ubuntu,CentOS等等)

- 机器需要2GB内存,CPU2核及以上

- 集群中机器网络彼此互通

- 集群中不可有重复的主机名

- 集群中不可有重复的MAC地址

3.2 安装规划

主节点一台机器,从节点两台机器

主节点master

主机名:

k8s131, IP:192.168.228.131从节点node

主机名:

k8s132, IP:192.168.228.132

主机名:k8s133, IP:192.168.228.133

3.3 安装前设置

三台机器都要进行设置。

1.设置主机名

在对应IP地址的机器上分别设置主机名为k8s131,k8s132,k8s133

hostnamectl set-hostname xxx执行后,检查是否都设置好了

hostname会话exit退出后重连shell,就会看到shell的计算机名已经变成了自己设置的计算机名root@k8s131

Last login: Thu Nov 21 17:47:02 2024 from 192.168.228.1[root@k8s133 ~]# 2.关闭交换分区

使用free -m命令,可以查看交换分区情况,安装K8s前,需要关闭交换分区,且永久关闭

[root@localhost ~]# free -m total used free shared buff/cache availableMem: 1819 309 1166 9 342 1360Swap: 2047 0 2047执行命令,永久关闭交换分区(将Swap设置为 0 0 0)

swapoff -a sed -ri 's/.*swap.*/#&/' /etc/fstab3.禁用,并永久禁用SELinux

sudo setenforce 0sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config4.设置允许iptables检查桥接流量

将IPV6流量桥接到IPV4网卡上,是K8s官方要求的做法

cat <<EOF | sudo tee /etc/modules-load.d/k8s.confbr_netfilterEOFcat <<EOF | sudo tee /etc/sysctl.d/k8s.confnet.bridge.bridge-nf-call-ip6tables = 1net.bridge.bridge-nf-call-iptables = 1EOFsudo sysctl --system5.关闭防火墙

CentOS7默认打开防火墙firewalld,3台都需要关闭firewalld

systemctl stop firewalldsystemctl disable firewalld3.4 安装K8s组件

1.安装kubelet、kubeadm、kubectl

首先添加k8s软件包的yum源到三台机器

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo[kubernetes]name=Kubernetesbaseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64enabled=1gpgcheck=0repo_gpgcheck=0gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpgexclude=kubelet kubeadm kubectlEOF三台机器依次执行安装命令

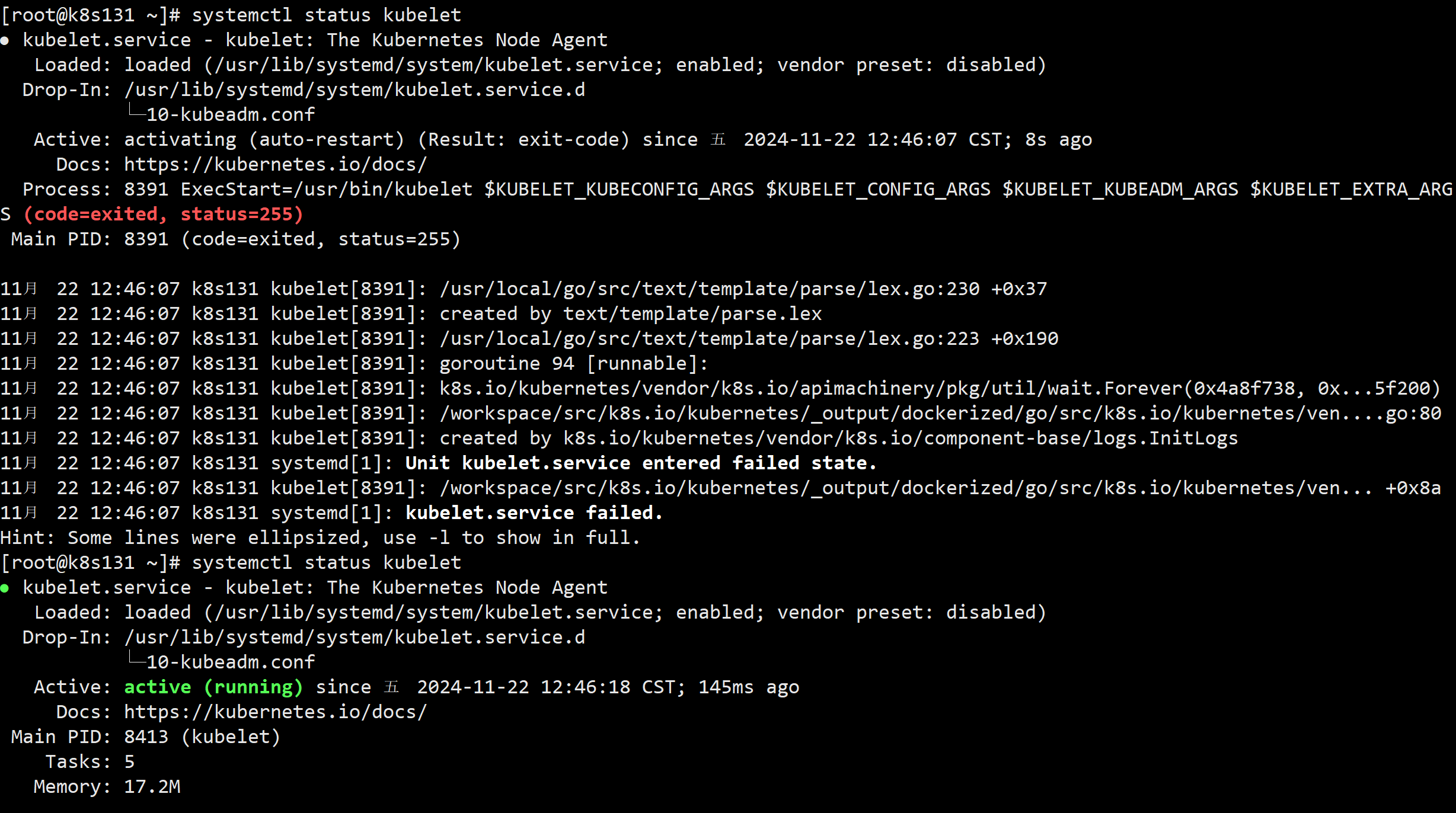

sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9 --disableexcludes=kubernetes三台机器都启动kubelet,并设置开机启动

sudo systemctl enable --now kubelet不停执行systemctl status kubelet会发现服务一直处于闪断状态,因为kubelet一直在等待

3.5 初始化主节点

使用kubeadm引导集群,初始化主节点。

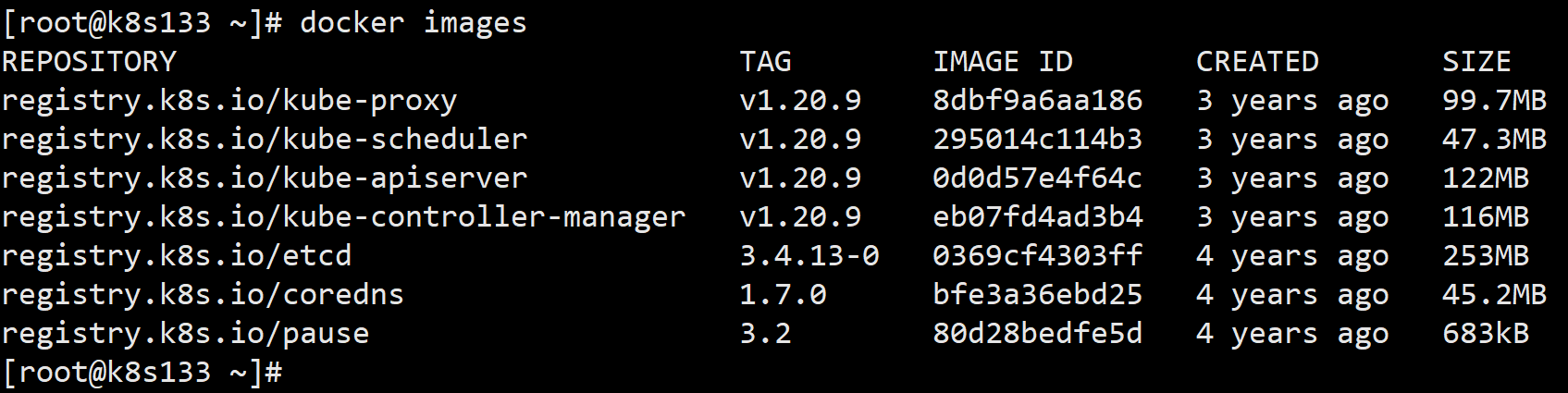

1.首先所有机器都要先下载需要的镜像

docker pull registry.k8s.io/kube-apiserver:v1.20.9docker pull registry.k8s.io/kube-proxy:v1.20.9docker pull registry.k8s.io/kube-controller-manager:v1.20.9docker pull registry.k8s.io/kube-scheduler:v1.20.9docker pull registry.k8s.io/coredns:1.7.0docker pull registry.k8s.io/etcd:3.4.13-0docker pull registry.k8s.io/pause:3.2下载完成后docker images验证

2.设置主节点域名映射

3台机器都必须添加master节点(又叫集群的入口节点)的域名映射,IP按实际情况修改。

echo "192.168.228.131 cluster-endpoint" >> /etc/hosts3.初始化主节点

在主节点所在机器上执行命令,初始化主节点

参数解释

--service-cidr,--pod-network-cidr两项设置的网络范围不能重叠,也不能同服务器所在网络范围重叠。--apiserver-advertise-address改成自己主节点的IP。--control-plane-endpoint改为自己设置的主节点域名。

命令

kubeadm init \--image-repository registry.k8s.io \--apiserver-advertise-address=192.168.228.131 \--control-plane-endpoint=cluster-endpoint \--kubernetes-version=v1.20.9 \--service-cidr=10.96.0.0/16 \--pod-network-cidr=192.168.23.0/24 等待初始化完成后,终端输出提示成功,说明初始化完成。输出的内容需要复制保存备用。

[root@k8s131 ~]# kubeadm init \> --image-repository registry.k8s.io \> --apiserver-advertise-address=192.168.228.131 \> --control-plane-endpoint=cluster-endpoint \> --kubernetes-version=v1.20.9 \> --service-cidr=10.96.0.0/16 \> --pod-network-cidr=192.168.23.0/24 [init] Using Kubernetes version: v1.20.9[preflight] Running pre-flight checks[WARNING Firewalld]: firewalld is active, please ensure ports [6443 10250] are open or your cluster may not function correctly[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.7. Latest validated version: 19.03[preflight] Pulling images required for setting up a Kubernetes cluster[preflight] This might take a minute or two, depending on the speed of your internet connection[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'[certs] Using certificateDir folder "/etc/kubernetes/pki"[certs] Generating "ca" certificate and key[certs] Generating "apiserver" certificate and key[certs] apiserver serving cert is signed for DNS names [cluster-endpoint k8s131 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.228.131][certs] Generating "apiserver-kubelet-client" certificate and key[certs] Generating "front-proxy-ca" certificate and key[certs] Generating "front-proxy-client" certificate and key[certs] Generating "etcd/ca" certificate and key[certs] Generating "etcd/server" certificate and key[certs] etcd/server serving cert is signed for DNS names [k8s131 localhost] and IPs [192.168.228.131 127.0.0.1 ::1][certs] Generating "etcd/peer" certificate and key[certs] etcd/peer serving cert is signed for DNS names [k8s131 localhost] and IPs [192.168.228.131 127.0.0.1 ::1][certs] Generating "etcd/healthcheck-client" certificate and key[certs] Generating "apiserver-etcd-client" certificate and key[certs] Generating "sa" key and public key[kubeconfig] Using kubeconfig folder "/etc/kubernetes"[kubeconfig] Writing "admin.conf" kubeconfig file[kubeconfig] Writing "kubelet.conf" kubeconfig file[kubeconfig] Writing "controller-manager.conf" kubeconfig file[kubeconfig] Writing "scheduler.conf" kubeconfig file[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"[kubelet-start] Starting the kubelet[control-plane] Using manifest folder "/etc/kubernetes/manifests"[control-plane] Creating static Pod manifest for "kube-apiserver"[control-plane] Creating static Pod manifest for "kube-controller-manager"[control-plane] Creating static Pod manifest for "kube-scheduler"[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s[apiclient] All control plane components are healthy after 14.007116 seconds[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace[kubelet] Creating a ConfigMap "kubelet-config-1.20" in namespace kube-system with the configuration for the kubelets in the cluster[upload-certs] Skipping phase. Please see --upload-certs[mark-control-plane] Marking the node k8s131 as control-plane by adding the labels "node-role.kubernetes.io/master=''" and "node-role.kubernetes.io/control-plane='' (deprecated)"[mark-control-plane] Marking the node k8s131 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule][bootstrap-token] Using token: 4qn4kj.52saric9a3vqnk1w[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key[addons] Applied essential addon: CoreDNS[addons] Applied essential addon: kube-proxyYour Kubernetes control-plane has initialized successfully!To start using your cluster, you need to run the following as a regular user: mkdir -p $HOME/.kube sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config sudo chown $(id -u):$(id -g) $HOME/.kube/configAlternatively, if you are the root user, you can run: export KUBECONFIG=/etc/kubernetes/admin.confYou should now deploy a pod network to the cluster.Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at: https://kubernetes.io/docs/concepts/cluster-administration/addons/You can now join any number of control-plane nodes by copying certificate authoritiesand service account keys on each node and then running the following as root: kubeadm join cluster-endpoint:6443 --token 4qn4kj.52saric9a3vqnk1w \ --discovery-token-ca-cert-hash sha256:7f12181600006aeb62fb38bcb82582809a9ad1911e49065f1fd13f9c68c95774 \ --control-plane Then you can join any number of worker nodes by running the following on each as root:kubeadm join cluster-endpoint:6443 --token 4qn4kj.52saric9a3vqnk1w \ --discovery-token-ca-cert-hash sha256:7f12181600006aeb62fb38bcb82582809a9ad1911e49065f1fd13f9c68c95774 4.配置文件目录

按照上述的输出提示“To start using your cluster, you need to run the following as a regular user” 还需要需要在主节点执行下面的命令

mkdir -p $HOME/.kubesudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/configsudo chown $(id -u):$(id -g) $HOME/.kube/config5.部署网络插件

按照上述的输出提示 You should now deploy a pod network to the cluster. Run “kubectl apply -f [podnetwork].yaml”,接下来还需要部署一个pod网络插件到master节点上。

输入命令kubectl get nodes -A,测试下主节点状态,返回NotReady是因为还没有部署网络插件。

[root@k8s131 ~]# kubectl get nodes -ANAME STATUS ROLES AGE VERSIONk8s131 NotReady control-plane,master 5h5m v1.20.9k8s支持多种网络插件,例如calico,安装前要先将它的编排文件下载到本地目录

curl https://docs.projectcalico.org/v3.20/manifests/calico.yaml -O 配置文件中找到以下内容

# - name: CALICO_IPV4POOL_CIDR# value: "192.168.0.0/16"将默认的192.168.0.0/16修改为--pod-network-cidr=指定的地址192.168.23.0/24,并解除注释

- name: CALICO_IPV4POOL_CIDR value: "192.168.23.0/24"在主节点执行命令,部署网络插件calico,部署过程需要联网下载镜像

kubectl apply -f calico.yaml[root@k8s131 opt]# kubectl apply -f calico.yaml configmap/calico-config createdcustomresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org createdcustomresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org createdclusterrole.rbac.authorization.k8s.io/calico-kube-controllers createdclusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers createdclusterrole.rbac.authorization.k8s.io/calico-node createdclusterrolebinding.rbac.authorization.k8s.io/calico-node createddaemonset.apps/calico-node createdserviceaccount/calico-node createddeployment.apps/calico-kube-controllers createdserviceaccount/calico-kube-controllers createdpoddisruptionbudget.policy/calico-kube-controllers created输入kubectl get pods -A查看网络插件pod部署情况

状态为ContainerCreating,Init说明正在下载部署

[root@k8s131 opt]# kubectl get pods -ANAMESPACE NAME READY STATUS RESTARTS AGEkube-system calico-kube-controllers-577f77cb5c-wpxh7 0/1 ContainerCreating 0 21mkube-system calico-node-7wb4z 0/1 Init:2/3 0 9m3skube-system coredns-76c6f6bbc9-4q5f9 0/1 ContainerCreating 0 5h41mkube-system coredns-76c6f6bbc9-nkdcl 0/1 ContainerCreating 0 5h41mkube-system etcd-k8s131 1/1 Running 1 5h41mkube-system kube-apiserver-k8s131 1/1 Running 1 5h41mkube-system kube-controller-manager-k8s131 1/1 Running 1 5h41mkube-system kube-proxy-nt5jf 1/1 Running 1 5h41mkube-system kube-scheduler-k8s131 1/1 Running 1 5h41m完成后状态均为Running

[root@k8s131 opt]# kubectl get pods -ANAMESPACE NAME READY STATUS RESTARTS AGEkube-system calico-kube-controllers-577f77cb5c-wpxh7 1/1 Running 0 24mkube-system calico-node-7wb4z 1/1 Running 0 12mkube-system coredns-76c6f6bbc9-4q5f9 1/1 Running 0 5h44mkube-system coredns-76c6f6bbc9-nkdcl 1/1 Running 0 5h44mkube-system etcd-k8s131 1/1 Running 1 5h45mkube-system kube-apiserver-k8s131 1/1 Running 1 5h45mkube-system kube-controller-manager-k8s131 1/1 Running 1 5h45mkube-system kube-proxy-nt5jf 1/1 Running 1 5h44mkube-system kube-scheduler-k8s131 1/1 Running 1 5h45m再次输入命令kubectl get nodes -A测试主节点状态,已经变为Ready,准备就绪,网络插件到此部署完成。

[root@k8s131 opt]# kubectl get nodes -ANAME STATUS ROLES AGE VERSIONk8s131 Ready control-plane,master 5h53m v1.20.9主节点至此初始化完成。

3.6 从节点加入集群

从节点加入集群的命令,在初始化主节点命令输出的内容中就有,在之前复制的输出内容中找到Then you can join any number of worker nodes by running the following on each as root:,并找到它下面的一行命令在每个从节点执行,该命令24小时内有效

kubeadm join cluster-endpoint:6443 --token 4qn4kj.52saric9a3vqnk1w \ --discovery-token-ca-cert-hash sha256:7f12181600006aeb62fb38bcb82582809a9ad1911e49065f1fd13f9c68c95774 如果令牌忘记了,或者超过了24小时,在master节点上执行下面的命令,生成新的令牌

kubeadm token create --print-join-command在两个从节点执行这个命令,执行后,以下提示,说明加入成功

[root@k8s132 ~]# kubeadm join cluster-endpoint:6443 --token bjme49.uhg7ubgjn2m16b76 --discovery-token-ca-cert-hash sha256:7f12181600006aeb62fb38bcb82582809a9ad1911e49065f1fd13f9c68c95774[preflight] Running pre-flight checks[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.7. Latest validated version: 19.03[WARNING Hostname]: hostname "k8s132" could not be reached[WARNING Hostname]: hostname "k8s132": lookup k8s132 on 192.168.228.2:53: server misbehaving[preflight] Reading configuration from the cluster...[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"[kubelet-start] Starting the kubelet[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...This node has joined the cluster:* Certificate signing request was sent to apiserver and a response was received.* The Kubelet was informed of the new secure connection details.Run 'kubectl get nodes' on the control-plane to see this node join the cluster.回到主节点执行命令kubectl get nodes -A,查看节点状态,可以看到两个从节点了,但是状态是NotReady,此时执行kubectl get pods -A查看pods状态,发现是因为从节点的网络插件未初始化成功完成导致,此时需要耐心等待网络插件加载完成,以我的经验来说加载网络插件需要下载很久。

管理集群要靠主节点,kubectl命令只能在主节点执行

[root@k8s131 ~]# kubectl get nodes -ANAME STATUS ROLES AGE VERSIONk8s131 Ready control-plane,master 2d23h v1.20.9k8s132 NotReady <none> 6m49s v1.20.9k8s133 NotReady <none> 6m41s v1.20.9[root@k8s131 ~]# kubectl get pods -ANAMESPACE NAME READY STATUS RESTARTS AGEkube-system calico-kube-controllers-577f77cb5c-wpxh7 1/1 Running 1 2d18hkube-system calico-node-7wb4z 1/1 Running 1 2d18hkube-system calico-node-plcdp 0/1 Init:ImagePullBackOff 0 8m22skube-system calico-node-zwmrg 0/1 Init:ImagePullBackOff 0 8m30skube-system coredns-76c6f6bbc9-4q5f9 1/1 Running 1 2d23hkube-system coredns-76c6f6bbc9-nkdcl 1/1 Running 1 2d23hkube-system etcd-k8s131 1/1 Running 2 2d23hkube-system kube-apiserver-k8s131 1/1 Running 2 2d23hkube-system kube-controller-manager-k8s131 1/1 Running 2 2d23hkube-system kube-proxy-flrq9 1/1 Running 0 8m22skube-system kube-proxy-nt5jf 1/1 Running 2 2d23hkube-system kube-proxy-tcrjv 1/1 Running 0 8m30skube-system kube-scheduler-k8s131 1/1 Running 2 2d23h等到kubectl get pods -A全部变为Running,再次测试kubectl get nodes -A

[root@k8s131 ~]# kubectl get nodes -ANAME STATUS ROLES AGE VERSIONk8s131 Ready control-plane,master 3d v1.20.9k8s132 Ready <none> 23m v1.20.9k8s133 Ready <none> 22m v1.20.9所有节点状态都是Ready,至此,两个从节点加入了K8s集群并进入就绪状态,整个K8s集群安装完成!